The WordPress CMS offers a lot of SEO benefits out of the box. Years of refinement by its developers mean that WordPress users don’t lack for SEO advantages.

Nonetheless, users still need to do some tweaking to get a better search-engine-optimized site.

With this in mind, today we will focus on how to improve the WordPress robots.txt file for SEO benefits.

What Is the Robots.txt File?

Most WordPress users have heard of the robots.txt file, yet many leave it at its default settings without any changes. The default version works well, but modifying it can give you an extra edge: an SEO advantage ;).

The robots.txt file is like a gateway. Whenever a search engine bot visits a site, it requests the robots.txt file first. This file tells the bot which parts of the site it may crawl and which parts it should stay out of.

Robots.txt is part of the Robots Exclusion Protocol (REP), a web standard that regulates how robots crawl websites.

In short, if there are pages on the site that search engines shouldn’t crawl, the robots.txt file is how you tell them so.

Also, remember that search engines are not obliged to follow the rules in the robots.txt file. A crawler may choose to ignore them, although the major search engines generally respect them.
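The gatekeeping described above happens on the crawler’s side. As a minimal sketch, assuming Python 3 and its standard-library `urllib.robotparser` module, this is the check a well-behaved bot performs before requesting a page. The rules, the example.com URLs, and the `ExampleBot` user-agent are hypothetical, and the robots.txt text is passed in as a string instead of being downloaded, so the sketch runs offline.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real crawler would first download
# it from https://<domain>/robots.txt before crawling anything else.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def may_crawl(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the robots.txt rules let `user_agent` crawl `url`."""
    parser = RobotFileParser()
    # parse() takes an iterable of lines and marks the rules as loaded.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for page in ("https://example.com/blog/", "https://example.com/private/notes.html"):
        print(page, may_crawl(ROBOTS_TXT, "ExampleBot", page))
```

Nothing on the server enforces the answer: a bot that skips this check can still request /private/notes.html, which is why obeying robots.txt is voluntary.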

Can You Hide the Robots.txt File?

No, you can’t.

The robots.txt file is publicly available, so anyone can see which parts of a website the webmaster has asked crawlers to avoid.

The file is easy to find: it always sits at the root of the domain, so simply type the domain name and add “/robots.txt” at the end of the URL (without quotes).

For example: http://yourdomain.com/robots.txt
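Because the location is fixed, a tool can derive the robots.txt address from any page on the site. The sketch below assumes Python 3; `robots_url` is a hypothetical helper, not part of WordPress or any library.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_url(page_url: str) -> str:
    """Return the robots.txt URL for the site that hosts `page_url`.

    robots.txt always sits at the root of the host, so the path,
    query string and fragment of the page URL are discarded.
    """
    parts = urlsplit(page_url)
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


if __name__ == "__main__":
    # prints http://yourdomain.com/robots.txt
    print(robots_url("http://yourdomain.com/blog/hello-world/?replytocom=5#comments"))
```

The host part is kept as-is, so a subdomain such as blog.yourdomain.com has its own, separate robots.txt file.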

For the same reason, robots.txt is not a suitable way to hide confidential information: the file itself lists the paths you want to keep private, and bots that ignore it can still access them. Use more secure methods, such as password protection, instead.

Structure of a Robots.txt File & SEO Modifications

Usually, an unmodified WordPress robots.txt file looks like this:

```
User-agent: *
Disallow: /wp-admin/
```

The * (asterisk) after ‘User-agent’ means that the rules which follow apply to all crawlers. The ‘Disallow’ line asks them not to crawl the /wp-admin/ directory, which holds the WordPress dashboard and has no value in search results. Many site owners extend this list to other directories, such as plugins and themes. Keep in mind, though, that a Disallow rule does not protect sensitive areas; as explained above, only measures like password protection do that.
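The following sketch checks what those two default lines actually allow, again assuming Python 3 and the standard-library `urllib.robotparser`. The crawler names and sample paths are only illustrative. Python’s parser applies the first matching rule in file order, whereas Google picks the most specific (longest) matching rule; for rules this simple, both give the same answer.

```python
from urllib.robotparser import RobotFileParser

# The default WordPress rules, one directive per line.
DEFAULT_WP_RULES = """\
User-agent: *
Disallow: /wp-admin/
"""

parser = RobotFileParser()
parser.parse(DEFAULT_WP_RULES.splitlines())

# "*" matches every crawler, so each user-agent gets the same answers.
for agent in ("Googlebot", "Bingbot"):
    for path in ("/wp-admin/options.php", "/2024/05/hello-world/"):
        print(f"{agent:10} {path:28} allowed={parser.can_fetch(agent, path)}")
```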

Now, what SEO-focused modifications can you make to the default robots.txt file?

Let’s see.

‘Disallow’ Usage

Each ‘Disallow’ line takes exactly one URL path; to block several paths, add one line per path, and don’t list the same path twice. Remember that blocking a page, category, or post with robots.txt only means that search engines won’t crawl it.

It does not mean that search engines won’t index the page and show it in results: a blocked URL can still appear if other pages link to it. The next method addresses this.
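If you generate robots.txt from a list of paths (for example, in a deployment script), the one-path-per-line and no-duplicates rules are easy to enforce in code. `build_robots_txt` below is a hypothetical Python 3 helper written for illustration; it emits a single group for one user-agent.

```python
from collections.abc import Iterable


def build_robots_txt(disallowed_paths: Iterable[str], user_agent: str = "*") -> str:
    """Return a robots.txt group with one Disallow line per unique path.

    Duplicates are dropped while keeping the original order. Empty paths
    are skipped, because an empty "Disallow:" value means "allow everything".
    """
    lines = [f"User-agent: {user_agent}"]
    seen = set()
    for path in disallowed_paths:
        path = path.strip()
        if path and path not in seen:
            seen.add(path)
            lines.append(f"Disallow: {path}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(build_robots_txt(["/wp-admin/", "/cgi-bin/", "/wp-admin/"]), end="")
```

Run on the sample list, the helper emits the /wp-admin/ rule only once, followed by the /cgi-bin/ rule.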

Meta Noindex

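This is the safest and recommended method to stop search engines from indexing pages and displaying them in search results. Note that a crawler only sees the tag if it is allowed to fetch the page, so don’t also block that page in robots.txt.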
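For reference, the tag itself looks like `<meta name="robots" content="noindex">` and sits in the page’s `<head>`. The sketch below, which assumes Python 3 and uses only the standard-library `html.parser`, checks whether a page’s HTML carries that directive, for example as a quick audit after changing your settings. `RobotsMetaParser` and `has_noindex` are hypothetical names, and the sketch ignores the `X-Robots-Tag` HTTP header, which can carry the same directive.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        # HTMLParser lower-cases tag and attribute names, not values.
        if tag != "meta":
            return
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() == "robots":
            for token in attributes.get("content", "").split(","):
                self.directives.add(token.strip().lower())


def has_noindex(html: str) -> bool:
    """Return True if the page asks search engines not to index it."""
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    # "none" is shorthand for "noindex, nofollow".
    return bool({"noindex", "none"} & parser.directives)


if __name__ == "__main__":
    page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
    print(has_noindex(page))
```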

How do you add the Meta Noindex tag?

There are many ways; here we use the Yoast SEO plugin (formerly WordPress SEO by Yoast) as the reference. If you want to build a strongly SEO-optimized site, this plugin is well worth using.

The Yoast plugin lets you add the Meta Noindex tag in two ways:

- Page / Post Level

When you add a post or page, open the ‘Advanced’ section of the Yoast SEO settings and you will find options that control how search engines should treat that post or page.

Select ‘Noindex’ and publish the post or page, and search engines will be told not to index it. Simple, right?

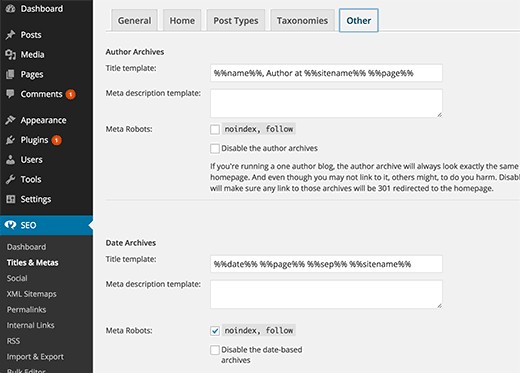

- Site Level

Open the Yoast SEO ‘Titles & Metas’ settings from the WordPress dashboard and you will find a list of taxonomies, each with options for how it should be treated in search results.

For instance, you may want to noindex categories, tags, media files, affiliate links, author archives, and so on. The choice is entirely yours.

With these two methods, you have full control over where the Meta Noindex tag is applied.

NoFollow Links

You can mark links as ‘nofollow’ to ask search engines not to follow them, but this is again not a foolproof strategy: search engines can still discover the linked pages through other routes, such as links from other sites.

To add the nofollow attribute, add rel="nofollow" to the link’s HTML:

```html
<a href="URL" rel="nofollow">Anchor Text</a>
```

Save the page or post, and the link(s) will carry the ‘nofollow’ attribute.

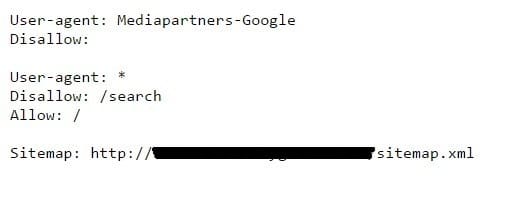
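To confirm that the attribute was saved, you can scan a page’s HTML for links whose `rel` attribute contains `nofollow`. This is a minimal sketch assuming Python 3 and the standard-library `html.parser`; `LinkRelParser` and `nofollow_links` are hypothetical names, and the sample HTML is made up.

```python
from html.parser import HTMLParser


class LinkRelParser(HTMLParser):
    """Records (href, is_nofollow) for every <a> tag that has an href."""

    def __init__(self) -> None:
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = {name: (value or "") for name, value in attrs}
        href = attributes.get("href")
        if not href:
            return
        # rel may hold several space-separated values, e.g. "nofollow noopener".
        rel_values = attributes.get("rel", "").lower().split()
        self.links.append((href, "nofollow" in rel_values))


def nofollow_links(html: str) -> list[str]:
    """Return the href of every link marked rel="nofollow"."""
    parser = LinkRelParser()
    parser.feed(html)
    parser.close()
    return [href for href, nofollow in parser.links if nofollow]


if __name__ == "__main__":
    sample = ('<p><a href="https://example.com/a" rel="nofollow">A</a> '
              '<a href="/b">B</a> <a href="/c" rel="noopener NoFollow">C</a></p>')
    print(nofollow_links(sample))
```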

Should the Sitemap Be Added to the Robots.txt File?

Yes. Adding the sitemap link to the robots.txt file is a recommended SEO practice. The earlier example didn’t include one, but if you check the robots.txt file of a Blogger blog, you will see a sitemap line at the end.

To do this in WordPress, edit the robots.txt file and add a ‘Sitemap:’ line followed by the full sitemap URL. You can add a single sitemap or several, with one ‘Sitemap:’ line per sitemap. Save the robots.txt file and you’re done.
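To check which sitemaps a robots.txt file advertises, Python’s standard-library `urllib.robotparser` can read the Sitemap lines for you; its `site_maps()` method requires Python 3.8 or later. The file content below, including both sitemap URLs, is a hypothetical example.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt with two sitemaps, each on its own "Sitemap:" line.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/

Sitemap: http://yourdomain.com/post-sitemap.xml
Sitemap: http://yourdomain.com/page-sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# site_maps() returns the Sitemap URLs in file order, or None if there are none.
print(parser.site_maps())
```

Sitemap lines are independent of the User-agent groups, so they can go anywhere in the file; putting them at the end is simply the common convention.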

How Do You Edit the Robots.txt File?

You can edit the robots.txt file using either of the following two methods:

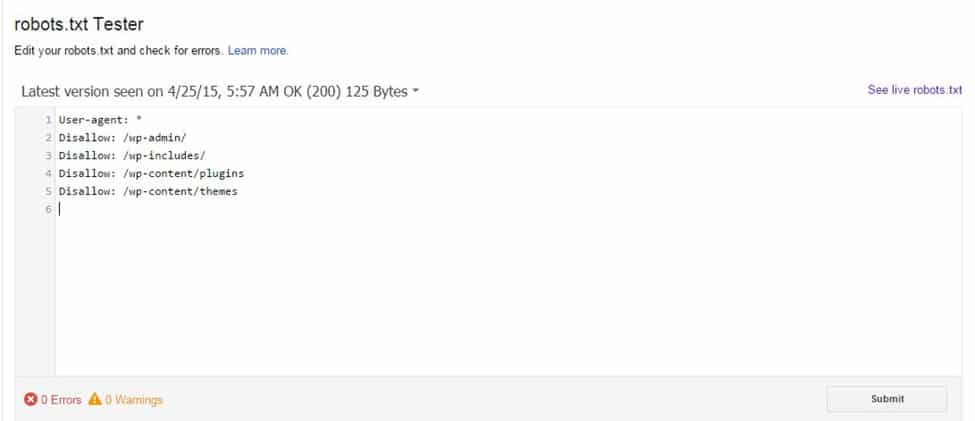

Google Webmaster Tools

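Log in to Google Webmaster Tools (now called Google Search Console), choose the site, and click ‘Crawl’. In the drop-down menu you will see the ‘robots.txt Tester’, where you can edit the file, add the sitemap URLs, and click ‘Submit’. Note that the tester does not change the file on your server: download the edited version and upload it to the root of your site.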

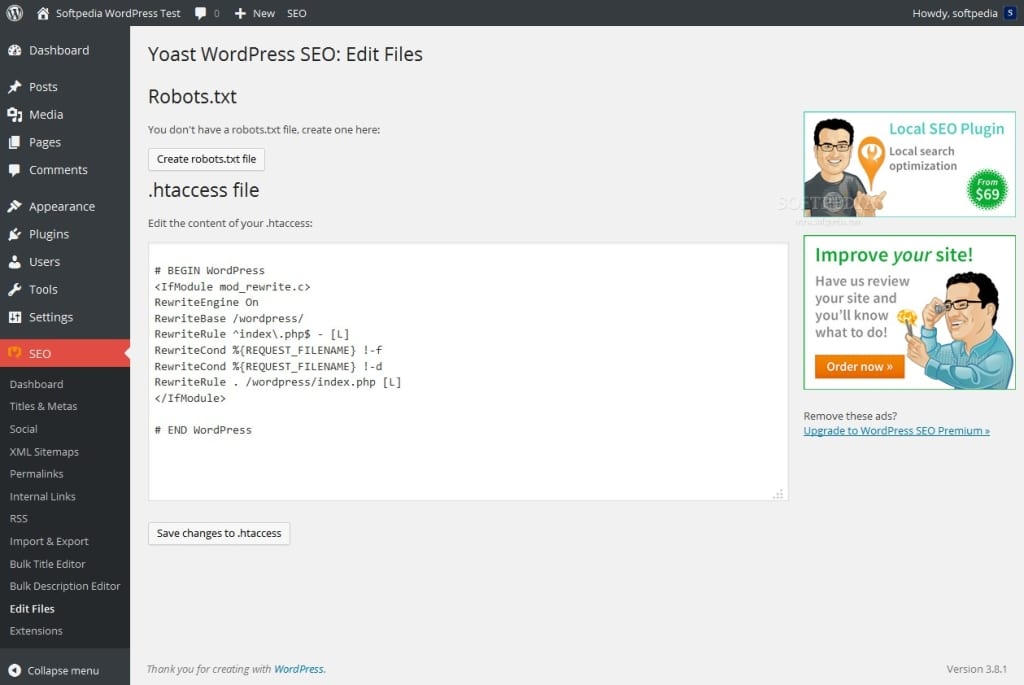

Yoast SEO Plugin

In the Yoast SEO dashboard, go to the Tools section and choose the ‘File editor’ option to edit the robots.txt file.

If the file doesn’t exist yet, click ‘Create robots.txt file’, add your rules, and save.

Ideal Robots.txt File Example

Let me show you one example of an ideal robots.txt file:

```
User-agent: *
Disallow: /cgi-bin/
Disallow: /wp-admin/
Disallow: /wp-content/plugins/
Disallow: /wp-content/cache/
Disallow: /wp-content/themes/

User-agent: Mediapartners-Google
Allow: /

User-agent: Googlebot-Image
Allow: /wp-content/uploads/

Sitemap: http://www.yoursite.com/sitemap.xml
```

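The above is a basic version of the robots.txt file that works for most WordPress sites; replace the Sitemap URL with your own. One caveat: the /wp-content/plugins/ and /wp-content/themes/ rules also block the CSS and JavaScript files stored in those folders, which Google uses to render your pages, so consider removing those two lines if Google reports that it cannot render your pages properly.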
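Before uploading a file like this, you can test how a parser reads it. The sketch below assumes Python 3.8+ and the standard-library `urllib.robotparser`; the sample paths are illustrative. It writes the AdSense crawler’s name as `Mediapartners-Google` without a trailing `*`, because a wildcard inside a user-agent name is not part of the standard and Python’s parser would not match it.

```python
from urllib.robotparser import RobotFileParser

IDEAL_RULES = """\
User-agent: *
Disallow: /cgi-bin/
Disallow: /wp-admin/
Disallow: /wp-content/plugins/
Disallow: /wp-content/cache/
Disallow: /wp-content/themes/

User-agent: Mediapartners-Google
Allow: /

User-agent: Googlebot-Image
Allow: /wp-content/uploads/

Sitemap: http://www.yoursite.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(IDEAL_RULES.splitlines())

# (user-agent, path) pairs to check against the rules above.
checks = [
    ("Googlebot", "/wp-admin/"),
    ("Googlebot", "/2024/05/hello-world/"),
    ("Mediapartners-Google", "/wp-admin/"),
    ("Googlebot-Image", "/wp-content/uploads/2024/05/photo.jpg"),
    ("Googlebot-Image", "/wp-content/plugins/"),
]
for agent, path in checks:
    print(f"{agent:21} {path:40} allowed={parser.can_fetch(agent, path)}")
print("Sitemaps:", parser.site_maps())
```

The last check is worth noticing: a crawler follows only the group that names it, so Googlebot-Image is not bound by the Disallow lines in the * group and may fetch /wp-content/plugins/. If you want a rule to apply to a named crawler too, repeat it in that crawler’s group.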

Conclusion

As a website grows, optimizing the robots.txt file becomes unavoidable. You rarely want every part of the site to be crawled and shown in search results, so you need ways to control that, and modifying the robots.txt file is one of them.

We hope you found the tutorial informative. If you have any doubts or questions, feel free to ask us in the comment section - we will be happy to answer.
